Want to build a product users love? Start with feedback.

Founders often assume they understand what users need, but post-launch feedback tells a different story. It’s not just about collecting opinions – it’s about organizing, prioritizing, and acting on them to refine your product. Here’s what successful founders do:

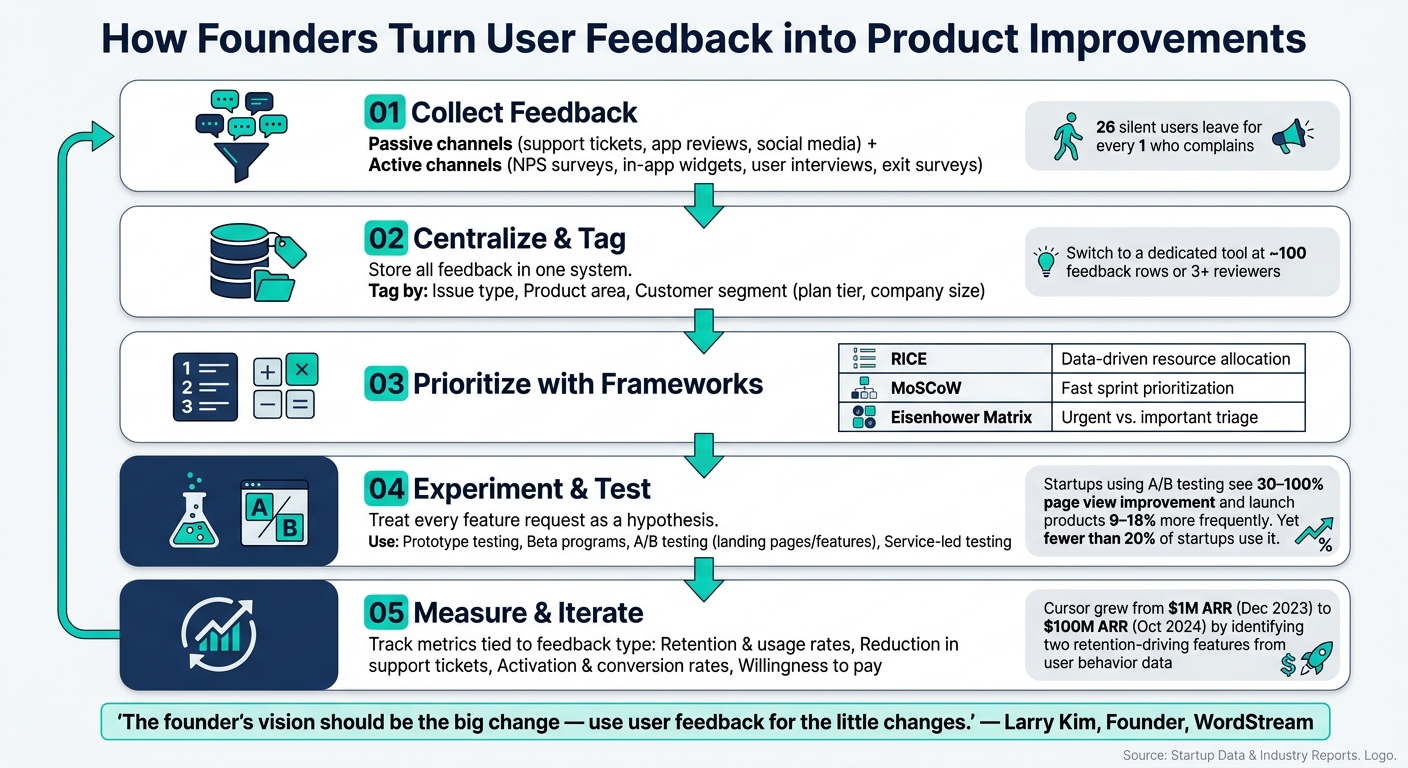

- Gather feedback effectively: Use active methods like surveys and interviews, and passive channels like support tickets and social media.

- Centralize insights: Store feedback in one place, tagging it by issue type, product area, and customer segment.

- Prioritize smartly: Frameworks like RICE and MoSCoW help decide what matters most.

- Experiment before scaling: Test ideas on a small scale to validate changes.

- Balance user input with vision: Feedback shapes improvements, but founders must stay focused on long-term goals.

Key takeaway: Feedback isn’t just noise – it’s a tool for smarter decisions when paired with a clear strategy.

How Founders Collect and Organize Feedback

Gathering and making sense of feedback takes a thoughtful approach. Many startup tech leaders emphasize that this process is critical for long-term growth. Founders often start with informal user insights and later adopt more structured methods to refine their process.

Feedback Channels and Tools

To understand their users better, founders rely on a mix of passive and active feedback channels. Passive methods include support tickets, app store reviews, and social media mentions on platforms like X or Reddit. These channels capture feedback users share voluntarily. On the other hand, active methods – such as NPS surveys, in-app widgets, user interviews, and exit surveys for churned users – allow founders to actively seek out insights rather than waiting for them to surface.

Each method has its strengths and limitations. Passive channels often highlight urgent issues but can disproportionately reflect the opinions of the most vocal users. Meanwhile, active channels are better at uncovering feedback from quieter users who might disengage without ever voicing concerns. A widely cited customer-service statistic holds that for every customer who complains, about 26 others stay silent, and many of those simply leave.
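Of the active channels mentioned above, NPS surveys come down to a small calculation: the share of promoters (ratings of 9 or 10) minus the share of detractors (ratings of 0 to 6). The sketch below assumes the standard 0 to 10 rating scale; the function name and the sample ratings are illustrative and not taken from any founder in this article.

```python
def net_promoter_score(ratings: list[int]) -> float:
    """Return NPS on the usual -100..100 scale from 0-10 survey ratings."""
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)   # ratings of 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)  # ratings of 0 through 6
    # Passives (7 or 8) count toward the total but toward neither group.
    return 100 * (promoters - detractors) / len(ratings)


# Made-up survey responses, for illustration only.
sample = [10, 9, 9, 8, 7, 6, 3, 10, 5, 9]
print(net_promoter_score(sample))  # 5 promoters, 3 detractors, 10 responses -> 20.0
```

A single score hides the reasons behind it, so it works best alongside the open-ended questions described in this section.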

The tools used to collect feedback also play a big role in shaping the insights founders gather. Tobias Whetton, co-founder of Supernotes, offers a great example. His team uses Tally forms with custom CSS and their own "SN Pro" typeface to create a seamless feedback experience. They focus exclusively on open-ended questions rather than dropdowns or multi-select options:

"We actually have no dropdowns or multi-selects. It’s all open-ended questions, which gives us much more valuable insights."

This approach helped Supernotes identify "spaced repetition" and "PDF attachments" as their most-requested features, which were then added to the product roadmap.

Centralizing Feedback for Action

Once feedback is collected, the next challenge is organizing it effectively. Using multiple channels like Slack, Intercom, spreadsheets, and CRMs can lead to fragmented data, making it hard to spot patterns or act quickly. The solution? A single, centralized system where all feedback is stored and tagged by type (e.g., feature request, bug, UX issue), product area (e.g., onboarding, billing), and customer segment (e.g., plan tier, company size).
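The tagging scheme described above can be sketched as a small record type plus a counting helper. This is a minimal illustration rather than any particular tool's data model: the `FeedbackItem` fields, the `count_by` helper, and the sample entries are all assumptions made up for the example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedbackItem:
    text: str
    source: str        # where it arrived, e.g. "intercom", "slack"
    kind: str          # "feature_request", "bug", or "ux_issue"
    product_area: str  # e.g. "onboarding", "billing"
    segment: str       # e.g. plan tier or company size


def count_by(items, *fields):
    """Count feedback items per combination of the given tag fields."""
    return Counter(tuple(getattr(item, f) for f in fields) for item in items)


# Hypothetical entries pulled from several channels into one list.
inbox = [
    FeedbackItem("Can't find the invoice download", "intercom", "ux_issue", "billing", "pro"),
    FeedbackItem("Invoice page is confusing", "slack", "ux_issue", "billing", "free"),
    FeedbackItem("Signup email never arrived", "support", "bug", "onboarding", "free"),
    FeedbackItem("Please add SSO", "interview", "feature_request", "onboarding", "enterprise"),
]

# Most frequent (kind, product_area) pairs first.
for tags, n in count_by(inbox, "kind", "product_area").most_common():
    print(tags, n)
```

Because every item carries the same tags regardless of channel, patterns such as repeated billing UX complaints show up in one query instead of being spread across tools.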

Connecting feedback with customer metadata adds another layer of insight. For instance, knowing whether a request comes from a high-revenue account or a free-tier user can help prioritize which feedback to address first. Hannah Chaplin, CEO of Receptive.io, warns about the risk of prioritizing the wrong voices:

"You can end up having a lot of noisy demanding customers which drown out other voices… they’re not necessarily the customers you should be listening to based on where you need to take the product."

While spreadsheets might work in the early stages, they quickly become unwieldy as the volume of feedback grows. A good rule of thumb: once you have around 100 rows of feedback or more than three team members reviewing it, it’s time to consider a dedicated feedback management tool. Magnus Health tackled this issue by introducing "Feature Request Friday", a weekly 30-minute session where the executive team reviewed feedback and decided what to prioritize. This routine not only kept the process manageable but also surfaced ideas inspired by other industries, doubling as informal competitive research.

Centralizing feedback creates a foundation for smarter, data-driven decisions. By organizing user insights in one place, founders can better understand customer needs and make strategic updates to their product.

Using Feedback to Shape the Product Roadmap

Once feedback is centralized, the next step for founders is turning those insights into actionable product strategies. Gathering feedback is just the starting point – the real challenge lies in deciding what to prioritize. With a flood of user requests, founders need a system to figure out which changes will have the biggest impact and which can wait.

Prioritization Frameworks

To make sense of all the feedback, many founders rely on structured frameworks to rank ideas objectively. Commonly used frameworks include RICE, MoSCoW, and the Eisenhower Matrix. Each has its strengths and works best at different stages of the product development cycle:

| Framework | Inputs | Best Use Case | Limitations |
|---|---|---|---|
| RICE | Reach, Impact, Confidence, Effort | Ideal for data-driven environments where resource allocation is key | Relies on accurate estimates; can be time-intensive |
| MoSCoW | Must have, Should have, Could have, Won’t have | Useful for quick prioritization during sprints or specific releases | Risk of "Must have" overload if not managed carefully |
| Eisenhower Matrix | Urgency vs. Importance | Best for separating immediate fixes from long-term goals | Doesn’t factor in complexity or development effort |

Each framework has its place. For example, RICE excels when you have reliable data to guide decisions. MoSCoW is faster but leans more on subjective judgment, and the Eisenhower Matrix is perfect for triaging urgent tasks, though it’s less helpful for comparing competing feature requests. Many founders combine these frameworks based on the situation.
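For readers who have not used RICE, the score is conventionally computed as Reach × Impact × Confidence ÷ Effort. The sketch below assumes that standard formula; the idea names, the numbers, and the units (users per quarter, person-months) are hypothetical placeholders, not data from any company mentioned here.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    reach: float       # estimated users affected per quarter
    impact: float      # relative scale, e.g. 0.25 (minimal) to 3 (massive)
    confidence: float  # 0.0 to 1.0, how much you trust the estimates
    effort: float      # person-months, must be positive

    def __post_init__(self):
        if self.effort <= 0:
            raise ValueError("effort must be positive")

    @property
    def rice(self) -> float:
        # Standard RICE formula: (reach * impact * confidence) / effort
        return self.reach * self.impact * self.confidence / self.effort


# Hypothetical backlog with made-up estimates.
ideas = [
    Idea("Bulk export", reach=800, impact=2, confidence=0.8, effort=4),
    Idea("SSO login", reach=500, impact=3, confidence=0.5, effort=5),
    Idea("Dark mode", reach=1000, impact=0.5, confidence=1.0, effort=1),
]

# Highest score first.
for idea in sorted(ideas, key=lambda i: i.rice, reverse=True):
    print(f"{idea.name}: {idea.rice:.0f}")
```

With these inputs the cheap, wide-reach item ranks first even though its impact per user is the lowest. That is the kind of trade-off the framework is meant to surface, and it is also why the result is only as good as the estimates fed into it.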

While these tools help quantify priorities, good judgment is still key to making the right calls.

Balancing Feedback and Vision

Frameworks can rank ideas, but they can’t replace a founder’s intuition and long-term vision. The real danger isn’t ignoring feedback – it’s letting the loudest voices steer the product in the wrong direction. As Hannah Chaplin, CEO of Receptive.io, explains:

"If you don’t have that strong foundation and vision for the business or for the product, then you have absolutely no reference. You can have all the data in the world, but it won’t make a difference."

While users often highlight genuine pain points, their proposed solutions tend to focus on small, incremental changes. A founder’s role is to dig deeper, identifying the core problem rather than simply acting on surface-level requests.

One way to stay focused is by maintaining a public-facing roadmap that outlines your strategic goals. This transparency helps users understand your direction, making it easier to decline requests that don’t align without causing frustration. When users see where the product is heading, a "no" feels less personal.

It’s also helpful to segment feedback by customer type. For instance, input from high-value enterprise clients may carry more weight than feedback from free-tier users. This approach ensures that the product evolves in alignment with strategic priorities.
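One simple way to let customer type carry more weight is to multiply each request by a per-segment weight before ranking. This is only a sketch: the segment names, the weights in `SEGMENT_WEIGHTS`, and the sample requests are assumptions chosen for illustration, and real weights would come from your own strategy (for example, revenue per account).

```python
from collections import defaultdict

# Assumed weights; tune these to reflect strategic priority, not just revenue.
SEGMENT_WEIGHTS = {"enterprise": 5.0, "pro": 2.0, "free": 1.0}


def weighted_demand(requests, weights=SEGMENT_WEIGHTS):
    """Rank features by the summed weight of the segments requesting them."""
    totals = defaultdict(float)
    for feature, segment in requests:
        totals[feature] += weights.get(segment, 1.0)  # unknown segments count as 1
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical (feature, segment) pairs.
requests = [
    ("sso", "enterprise"),
    ("dark_mode", "free"),
    ("dark_mode", "free"),
    ("dark_mode", "free"),
    ("sso", "enterprise"),
    ("dark_mode", "pro"),
]

print(weighted_demand(requests))
```

By raw count, dark mode leads four requests to two. After weighting, SSO comes out ahead, which shows how the choice of weights, and not just the volume of requests, drives the ranking.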

Running Experiments Based on Feedback

After launching a product, the real work begins: refining and improving it based on user feedback. But not every piece of feedback should lead to immediate changes. Experimentation is the key to figuring out which ideas actually make a difference. By testing changes on a smaller scale, founders can pinpoint what works before committing to a full-scale rollout.

Experimentation Techniques

Treat every feature request or idea as a hypothesis to be tested, not a guaranteed solution. This approach ensures that the product evolves in line with its overall vision, rather than being shaped solely by individual user demands.

Steve Sewell, Co-founder and CEO of Builder.io, demonstrated this approach in 2019. To validate a complete product rebuild, he partnered with his former employer, ShopStyle. He personally built their new homepage and offered free coding services in exchange for a modest $90/month platform fee. This "design partner" strategy provided a real-world testing environment, drastically reducing the time needed for validation. As Sewell explains:

"I was able to shorten the validation cycle from 12 months to as little as 12 hours." – Steve Sewell, Co-founder and CEO, Builder.io

Other founders take similar approaches, using prototype testing to observe real user interactions and uncover friction points that raw data might overlook. Some even test demand by creating landing pages with explainer videos and signup forms before investing time in development.

Once an experiment is underway, setting clear metrics is essential to measure its success.

Measuring the Impact of Iterations

Testing without tracking results is like flying blind. The success of an experiment hinges on defining the right metrics, which depend on the type of feedback being addressed.

Cursor (Anysphere) offers a great example of data-driven iteration. After launching their AI-native code editor MVP in early 2023, they noticed a sharp drop in usage following an initial surge. By closely analyzing user behavior, the team identified two features – Cmd+K instructed edits and Codebase Indexing – that significantly improved retention. As a result, Cursor’s revenue skyrocketed from $1M ARR in December 2023 to $100M ARR by October 2024.

Here’s a quick guide connecting feedback types to experimentation methods and success metrics:

| Feedback Type | Experiment Technique | Primary Success Metric |
|---|---|---|
| Low engagement | Iterative MVP testing | Retention & usage rates |
| Usability issues | Qualitative user observation | Reduction in friction or support tickets |
| Market fit doubt | Service-led testing (nominal fee) | Willingness to pay |
| Feature usability | Prototype / beta program | Activation rate / qualitative feedback |
| Doubt over value proposition | A/B testing (landing pages/features) | Activation rate / conversion rate |

A/B testing is particularly powerful. Startups using this method to validate features often see page views improve by 30% to 100% over a year and launch new products 9% to 18% more frequently. Yet, fewer than 20% of startups actually leverage A/B testing.
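To judge whether a difference in conversion rate between two variants is more than noise, one common approach is a two-proportion z-test. The sketch below uses only the Python standard library; the function name `ab_test` and the visitor and conversion counts are hypothetical, and a production setup would also need a sample size and stopping rule decided in advance.

```python
from math import sqrt
from statistics import NormalDist


def ab_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided two-proportion z-test. Returns (absolute lift, z, p_value)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; test is undefined")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_b - p_a, z, p_value


# Made-up numbers: control converts 120 of 2,400 visitors, variant 156 of 2,400.
lift, z, p = ab_test(conv_a=120, n_a=2400, conv_b=156, n_b=2400)
print(f"lift={lift:.3f}, z={z:.2f}, p={p:.3f}")
```

A small p-value suggests the variant's higher conversion rate is unlikely to be chance alone. It says nothing about whether the lift is large enough to matter, so it should be read alongside the success metric chosen for the experiment.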

Beyond formal tests, plain usage is a signal in itself: if users keep returning to a flawed product, the problem being solved is probably important. As Steve Sewell puts it:

"If you made a really crappy product, are people still using it anyway? That’s a good sign that you’re solving a big enough problem." – Steve Sewell, Co-founder and CEO, Builder.io

Lessons from Founders: Patterns and Takeaways

Common Patterns in Feedback and Iteration

Founders often encounter recurring themes as they navigate the challenges of building and refining their products. One of the most telling patterns is this: when users stick with a flawed product, it highlights the problem’s importance. If people are willing to endure the rough edges, it’s a clear sign the solution addresses a pressing need.

Another recurring theme is the gap between assumptions and reality. Take Steve Sewell’s experience with Builder.io. After dedicating all of 2018 to developing the platform, his first live demo revealed a major misstep – he hadn’t centered his work around the customer’s needs. His response? A complete mindset shift:

"I drastically recalibrated and I removed the assumption from my head that I really knew anything. I needed to make sure that anything I thought I knew was just assumptions and hypothesis and I need to validate them." – Steve Sewell, Co-founder and CEO, Builder.io

Successful founders tend to shorten feedback loops significantly, moving from lengthy validation cycles to mere days or even hours. Early on, they rely heavily on qualitative signals, focusing on how users actually interact with their product rather than just analyzing metrics. This hands-on understanding of user behavior becomes a critical guide when addressing negative feedback.

Turning Negative Feedback into Action

Negative feedback can be tough to hear, but for many founders, it’s the catalyst for transformative change. Instead of seeing criticism as a setback, they treat it as an opportunity to refine their approach.

For instance, Ravin Thambapillai, founder of Credal, faced a harsh reality in early 2025. A pilot company’s executives offered just $20/month for a product priced at $2,000/month. Instead of making minor adjustments, he dug deeper into sales conversations. The real issue? Customers were concerned about AI security, not the "AI chief of staff" concept Credal had focused on. This insight led to a complete pivot, driving 20–30% monthly growth in enterprise sales and landing major clients.

"Maybe the sort of takeaway from that is that actually the most important thing to do right is to actually sort of be reflective, embrace the reality of what’s not working, and then act decisively to course correct." – Ravin Thambapillai, Founder, Credal

The table below outlines common types of negative feedback, their root causes, and how founders typically address them:

| Type of Negative Feedback | Root Cause | Typical Founder Response |
|---|---|---|
| Product "misses the mark" during demo | Building based on assumptions, not customer empathy | Scrap and rebuild a lean MVP based on direct user input |
| Order-of-magnitude valuation gap | Product doesn’t solve a high-value problem | Pivot decisively; follow secondary user interest signals |
| Low adoption of new features | High switching costs or rigid user perceptions | Refine positioning; focus on user education |
| Consistent interest in a secondary feature | Core product is "nice-to-have"; minor feature solves a "must-have" pain point | Shift focus to the secondary feature as the main offering |
| Internal technical failure | Infrastructure gaps exposed under real-world use | Investigate for potential new market opportunities |

In each of these scenarios, incremental changes won’t cut it when facing structural challenges. Founders who succeed are those willing to take bold, decisive steps based on clear signals – even if it means scrapping months of hard work. These actions, though difficult, often lead to breakthroughs in leveraging user insights for meaningful progress.

Conclusion: Key Takeaways on Feedback-Driven Iteration

Founders agree: feedback only brings results when it’s structured, actionable, and tied to a clear product vision. Gathering opinions without a system to organize and prioritize them? That’s just noise. The key lies in creating repeatable processes to turn user feedback into meaningful improvements.

A few principles stand out throughout the feedback cycle. First, centralizing input – whether through a Slack channel or a tool like Atlassian’s Jira Product Discovery – ensures the whole team stays aligned. Next, tagging feedback by user cohort (like payment plans, feature usage, or company size) helps separate useful insights from background chatter. Finally, evaluating every request against the product’s Unique Value Proposition prevents the roadmap from becoming cluttered with unnecessary features.

Balancing a strong long-term vision with user feedback sharpens execution. Larry Kim, founder of WordStream, sums it up:

"The founder’s vision should be the big change and use user feedback for the little changes."

A great example of this balance is Customer.io. Colin Nederkoorn and his co-founder initially built a general analytics tool. But after hearing that users wanted to influence behavior rather than just track it, they pivoted to a behavior-triggered email platform. Starting with just five companies paying $10/month, they grew to $50 million in ARR by 2024. This mix of vision and adaptability drives ongoing growth and discovery.

For more stories like this, check out the Code Story podcast. Hosted by Noah Labhart, it dives into candid discussions with tech leaders about building and scaling digital products, including how they handle feedback and make pivotal decisions.

FAQs

How do I know which feedback is worth acting on?

To spot feedback that truly matters, concentrate on its relevance, consistency, and how well it aligns with your product’s vision. Feedback that highlights user pain points or directly touches on core features usually holds the most weight. Look for patterns – when multiple users bring up the same issues, it’s a sign worth noting. Prioritize insights from your most engaged or ideal customers, as their input often reflects the needs of your target audience. On the flip side, avoid overreacting to one-off comments from users who don’t represent your broader customer base. The key is focusing on feedback that leads to meaningful updates and supports your strategic objectives.

What’s the simplest way to centralize feedback early on?

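Start with a single shared place, such as one spreadsheet or a dedicated Slack channel, and route every channel into it: support tickets, survey responses, interview notes, and social mentions. Tag each entry by type, product area, and customer segment so patterns are easy to spot. Once you pass roughly 100 rows of feedback or have more than three people reviewing it, consider moving to a dedicated feedback management tool.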

Which metrics should I use to judge an iteration?

To assess an iteration’s success, pick the metric that matches the feedback you were responding to rather than tracking everything. Retention and usage rates suit engagement problems, support-ticket volume suits usability fixes, and activation or conversion rates suit new features or changes to the value proposition. Define the metric before the experiment starts so the result can be read clearly.